I'm Isaac Saul, and this is Tangle: an independent, nonpartisan, subscriber-supported politics newsletter that summarizes the best arguments from across the political spectrum on the news of the day — then “my take.”

Are you new here? Get free emails to your inbox daily. Would you rather listen? You can find our podcast here.

Today’s read: 14 minutes.

A Tangle data centers debate.

Tomorrow, we’re doing something different: Two Tangle editors will debate the issue of data centers. Managing Editor Ari Weitzman will argue in favor of building more data centers:

The choice is between a future that continues to allow for more automation and connectivity and one that requires more manual processes, more travel for work, more highways, and more greenhouse-gas emissions due to vehicular travel (as well as traffic deaths).

On the other side, Associate Editor Lindsey Knuth will argue against:

At a time when laws regulating data centers’ water and electricity use are just beginning to be rolled out in some states, we’re not at a place to responsibly keep building — and I understand why communities around the country are feeling such resentment and frustration.

This will be the first time we’ve tried a format like this, and we’re excited to hear what you think. To read how the arguments compare to one another, and to chime in yourself, make sure you’re subscribed!

Quick hits.

- The Pentagon said that American warships turned back 13 vessels attempting to transit the Strait of Hormuz since Monday. (The update) Separately, Iran’s top joint military command said it would disrupt seafaring trade in the region if the U.S. blockade continues. (The warning)

- President Donald Trump said he would fire Federal Reserve Chairman Jerome Powell if Powell does not step down from his position when his term expires in May. Powell may remain in place as chair “pro tempore” if President Trump’s nominee to succeed him, Kevin Warsh, is not confirmed when Powell’s term ends. Warsh’s nomination is currently before the Senate Banking Committee. (The comments)

- A federal jury in New York found that Live Nation, the owner of Ticketmaster, acted as a monopoly and violated federal and state antitrust laws, determining that Ticketmaster had overcharged consumers by $1.72 for each ticket. A judge will determine the penalty for Live Nation, which could include a breakup of Live Nation and Ticketmaster. (The verdict)

- In a 52–47 vote, the Senate rejected a war powers resolution that sought to curb President Trump’s ability to continue the Iran war without Congressional authorization. This was the fourth such vote since Operation Epic Fury began on February 28. (The vote)

- Health Secretary Robert F. Kennedy Jr. will testify before the House Ways and Means Committee on Thursday to discuss proposed budget cuts for the Department of Health and Human Services, as well as personnel changes at top health agencies. (The hearing)

Protect against rising vet costs with pet insurance

The cost of veterinary services has been rising recently, with some common surgical procedures costing up to $7,000.

Pet insurance can help cover accidents, illnesses, and even routine care, with some plans reimbursing up to 90% of costs.

Money.com's Best Pet Insurance list can help you find affordable coverage starting at just $10 a month so you can focus on what matters most — your furry friend's well-being.

Get a personalized pet insurance quote today and save thousands on costly vet bills.

Today’s topic.

Anthropic’s latest artificial intelligence model. On April 7, artificial intelligence (AI) company Anthropic announced that it would not release its newest AI model, called Claude Mythos Preview, to the general public, citing potential security risks. Instead, Anthropic released the model to a select group of about 50 companies that will test its capabilities in a defensive security initiative known as Project Glasswing.

Back up: In early March, President Donald Trump ordered federal agencies to stop using Anthropic AI products after the company refused to grant the Pentagon unrestricted access to its models; the government also labeled Anthropic a supply chain risk, and the company sued the administration in response. The legal dispute between Anthropic and the Trump administration is ongoing.

On March 26, Fortune reported that it had accessed some of Anthropic’s sensitive internal data in an unsecured data trove. The data contained unreleased information discussing a new AI model, which internal documents called “the most capable model it had yet trained.” Following Fortune’s report, Anthropic acknowledged the existence of the model — Mythos — and said that it had begun testing with select early access customers.

According to the company, Mythos’s capabilities represent a “step change” in AI performance. During early testing, Mythos demonstrated advanced capabilities to identify and exploit previously undetected cybersecurity weaknesses across a wide range of servers and operating systems. It also reportedly acted autonomously, in one instance breaking out of internet search restrictions and emailing an Anthropic worker unprompted. While Anthropic believes Mythos to be the most advanced AI model currently available, Anthropic security researcher Logan Graham said other AI companies could release similarly advanced models within 6–18 months.

Anthropic briefed the Trump administration on Mythos last week, with co-founder Jack Clark saying that Anthropic did not want a “narrow contracting dispute” to overshadow the company’s concern for national security matters. Following the briefing, Treasury Secretary Scott Bessent met with leaders of the nation’s largest banks to discuss Mythos’s security risk.

Meanwhile, staff from at least two federal agencies have reached out to Anthropic expressing interest in integrating Mythos into their cyber defense systems, despite the federal government’s ban on working with Anthropic. Additionally, several Congressional committees asked to independently evaluate the model’s capabilities.

Below, we’ll get into what writers from the right, the left, and the tech industry are saying about Claude Mythos. Then, Executive Editor Isaac Saul gives his take.

What the right is saying.

- The right is mixed on Mythos, with some saying the product requires urgent discussions among world leaders.

- Others suggest Mythos and further AI advances are a boon to society.

In The Washington Post, Megan McArdle explored “what Anthropic’s new nightmare means.”

“In plain English, Anthropic says its newest AI model has found security holes in the major systems that power … well, almost everything. Amateurs with modest coding expertise could conceivably exploit these holes to hack and crack a frightening chunk of the nation’s digital infrastructure,” McArdle wrote. “Instead of releasing Claude Mythos Preview to the public, Anthropic is working with a consortium of key players such as Apple, Google and Microsoft to patch these holes as soon as possible. That’s a strong signal that the problem is real.”

“Some will see this as more reason to ban AI before it steals our passwords and our jobs. Unfortunately, that won’t work, as this week’s events demonstrate, because the technology is out there, and if the United States doesn’t develop it, someone else will,” McArdle said. “The obvious rejoinder is bilateral talks are needed to enforce a worldwide pause. That’s an appealing but unworkable solution. It would amount to a major arms control negotiation, which can take years, if not decades, while AI develops new capabilities practically every month.”

In The Wall Street Journal, Holman W. Jenkins, Jr. argued “Anthropic’s new product isn’t a cyber threat but a solution to cyber threats.”

“Mythos has already paid for itself as far as society is concerned. What if Anthropic were a Chinese company, reporting its discovery to the Communist Party? Think other hostile governments and criminal gangs aren’t also hunting for the same vulnerabilities?” Jenkins asked. “Anthropic doesn’t consider being for-profit inconsistent with its founders’ safety mission. Now you can expect it to create a billion-dollar business fixing the software flaws discovered by Mythos. Hooray. Let’s have more of this.”

“So much official communication, from government, politicians, business or the news media, traffics in shortcuts, stereotypes and fallacies (the hindsight fallacy being a stanchion of news coverage). The reason is partly economic. Thinking is costly. Information search is costly. Asking people to learn something new that goes against prior belief or intuition is costly. AI makes better thinking cheaper. It may have its biggest effect in those large swaths of decision-making where people most often avoid the investment,” Jenkins wrote. “This is the real AI opportunity — the opportunity to improve all forms of public and private decision-making.”

What the left is saying.

- The left is deeply concerned about Mythos’s reported capabilities and its risks to global security.

- Some say governments must act now to respond to these potential threats.

In The Atlantic, Matteo Wong wrote “Claude Mythos is everyone’s problem.”

“Mythos Preview appears to represent not an incremental change but the beginning of a paradigm shift. Until recently, the biggest advantage of AI-assisted hacking was not ingenuity, per se, so much as speed and scale. These bots could be as good as many human cybersecurity experts, but not necessarily better,” Wong said. “According to Anthropic, the bot has been able to find thousands of software bugs that had gone undetected, sometimes for decades… The model has found a nearly 30-year-old vulnerability in one of the world’s most secure operating systems.”

“Perhaps more concerning than the reported capabilities of Mythos Preview is that other companies are not far behind. OpenAI is reportedly set to release its own similarly powerful model to a select group of companies. It’s very possible, even likely, that Google DeepMind, xAI, and AI firms in China are next,” Wong wrote. “These companies can or could soon have the capability to launch major cyberattacks, conduct mass surveillance, influence military operations, cause huge swings in financial and labor markets, and reorient global supply chains. In theory, nothing governs these companies other than their own morals and their investors.”

In The New York Times, Thomas L. Friedman said “Anthropic’s restraint is a terrifying warning sign.”

“Superintelligent A.I. is arriving faster than anticipated, at least in this area. We knew it was getting amazingly good at enabling anyone, no matter how computer literate, to write software code. But even Anthropic reportedly did not anticipate that it would get this good, this fast,” Friedman wrote. “I’m really not being hyperbolic when I say that kids could deploy this by accident. Mom and Dad, get ready for: ‘Honey, what did you do after school today?’ ‘Well, Mom, my friends and I took down the power grid. What’s for dinner?’”

“No country in the world can solve this problem alone. The solution — this may shock people — must begin with the two A.I. superpowers, the U.S. and China. It is now urgent that they learn to collaborate to prevent bad actors from gaining access to this next level of cyber capability,” Friedman said. “Such a powerful tool would threaten them both, leaving them exposed to criminal actors inside their countries and terrorist groups and other adversaries outside. It could easily become a greater threat to each country than the two countries are to each other.”

What technology writers are saying.

- Technology writers are concerned about Mythos’s capabilities, saying it will change how we view cybersecurity threats.

- Others worry this technology will inevitably benefit bad actors.

In Understanding AI, Kai Williams described “why Anthropic believes its latest model is too dangerous to release.”

“The idea that LLMs might be used for hacking is not new. OpenAI has long published a Frontier Safety Framework, which tracks how good its models are at hacking. Until recently, the answer was ‘not very’… But that started to change last fall, when LLMs — especially Anthropic’s Claude — started becoming useful for cyberoffense,” Williams wrote. “Bloomberg reported in February that a hacker used Claude to steal millions of taxpayer and voter records from the Mexican government. The same month, Amazon announced that Russian hackers had used AI tools to breach over 600 firewalls around the world. But the examples given in Anthropic’s blog post are more impressive — and scary — than that.”

“For the past 20 years or so, a sufficiently motivated and well-funded hacking organization could probably break into most systems, outside of the most hardened in the world. But it often wasn’t worth the effort. Human cyber talent is expensive, and multi-layered security protections made it so tedious (and therefore expensive) to complete an attack that potential hackers didn’t bother,” Williams said. “Mythos-class models could slash the cost of hacking, bringing this equilibrium to an end. Systems everywhere might start to get compromised.”

In Spyglass, M.G. Siegler wrote about “the casual catastrophe of AI.”

“You can’t help but read all of these stories about all the bugs, vulnerabilities, and exploits that Anthropic’s model is finding across basically all computing systems out there in the real world and think ‘holy shit, we’re cooked.’ While ‘Project Glasswing’ seems like a valiant effort to get ahead of the issues, come on, we know how this movie ends,” Siegler said. “But my main takeaway is that it has less to do with the genius of these AI models… but it’s more about the breadth. Both of knowledge and time.”

“No one creates systems to have obvious vulnerabilities for others to fix. They’re the byproduct of a million little variables — a scale a human isn’t suited to deal with. But AI is. Issues that might take a human years to find and fix can be found and solved almost instantly by such systems,” Siegler wrote. “Historically, many vulnerabilities have been fixed only after someone exploited them in some way. Again, that’s because the incentives are in favor of the attacker versus the defender. If and when Mythos-caliber tools are put in the hands of hackers… yeah.”

My take.

Reminder: “My take” is a section where we give ourselves space to share a personal opinion. If you have feedback, criticism or compliments, don't unsubscribe. Write in by replying to this email, or leave a comment.

- I’m skeptical about a lot of AI hype, and this story is no exception.

- After talking with an expert, though, I can see how Anthropic could be trying to responsibly prepare for the release of a powerful tool.

- Lots of people are jumping to conclusions based on press releases, but I’ll wait to judge until I see the evidence myself.

Executive Editor Isaac Saul: When the Mythos story first broke, I had a hard time not rolling my eyes.

My initial instinct was to post on X about a newsletter product we were rolling out at Tangle that was so good we didn’t think the public could handle it. But, you know, if you wanted to play with a preview of it, you could just become a paying member and we’d send you a special version of it to see if it melts your brain.

I really do think something about all this is obvious PR, and I think these artificial intelligence companies are extremely good at it. Not a little good at it — but extremely. After all, they have to pull off an incredible high-wire act: They’re creating a product that they’re telling the public is so good it’s going to steal your jobs, hand over cybercrime tools to bad actors, and potentially destroy all of humanity. But, also, you should be really excited about this stuff and invest in their companies. “A new product so good it’s dangerous to release to the public” feels like a magnum opus of publicity.

Despite my snark, I have to concede that I don’t totally understand or really know what’s going on here. If we’re taking Anthropic’s word, it’s easy to be skeptical: Imagine the digital security infrastructure of an important institution like a bank as if it’s your house. Anthropic is claiming Mythos can examine the house and quickly find every lock that can be picked, or every window that was left open, or maybe even a sewer pipe that runs up to your basement. These “zero-day vulnerabilities” are often unknown to you, the person living inside the house, so you can’t defend against them. Anthropic, then, is telling you your home is vulnerable to intrusion, and also offering to sell you a security system that can identify those vulnerabilities before it makes the system available to a bunch of cat burglars.

Now, this isn’t my industry. I don’t have a tech background, and I’ve never worked in or reported deeply on the AI space — I’m basically a common fella trying to figure out what the heck is going on like everyone else. But my immediate thoughts are: If this is true, it’s pretty unsettling. If it’s a sales pitch, it’s damn good. And if Mythos is really as good as Anthropic says, why ever release it to anyone? What are the odds that there are zero bad actors working at these big companies with Mythos access? Wouldn’t the actually safe thing to do be to take this model out behind the barn and put it to bed?

In pursuit of understanding this stuff, I spoke to tech journalist Casey Newton last week. Newton is one of the best-known tech journalists in the game, with strong relationships in the industry. Unlike me, this is his beat. His fiancé also works at Anthropic, the company behind Claude Mythos, so he has to disclose that every time he writes or talks about what it’s doing (I’ll note that he’s faced criticism, even from our own audience, for being too Anthropic-friendly in some of his reporting).

Still, whatever his potential biases, Newton is an honest, fair reporter, so I brought my skepticism about the Mythos hype to him. He argued that the reports can’t possibly be only hype, because Anthropic would obviously release this technology if it thought it was safe. After all, this is the first time the company has held back a model, and there is clearly demand for more of its product. He insisted Anthropic’s position was logical: If this product really is dangerous, releasing it publicly would create a massive legal liability, and federal agencies would kick down Anthropic’s door if people started using Mythos to hack all manner of websites, government services, or banking systems.

Newton, in what he described as an effort to “unbias” himself, spoke to Alex Stamos, the former chief security officer at Facebook. Stamos told him that Mythos and Project Glasswing were, in fact, a huge deal, adding that “we only have something like six months” before other models catch up, at which point “every ransomware actor will be able to find and weaponize bugs without leaving traces for law enforcement to find.” Therefore, Anthropic must help our government and all these tech companies prepare.

I’m not convinced that future is inevitable, and for what it’s worth, I don’t know whether Stamos actually got a look at Mythos or is relying on reports or interviews to form his view. But I think allowing companies and the government time to get ahead of worst-case scenarios is reasonable. Newton also suggested that Anthropic may not have the server capacity to power this new model at scale, and leadership could be holding the model back to give themselves time to build that capacity. That could give more credence to the “PR theory” I described earlier.

Without experiencing the model myself or hearing from people who have, I still have a hard time not scoffing at folks like Thomas Friedman (under “What the left is saying”) imagining a group of teenagers taking down the power grid before dinner. Again, everyone is making these dire predictions based solely on Anthropic’s own research about its own model, and limited (often anonymous) leaks that all seem to originate within the company. We haven’t even heard from the 50 or so tech companies who are testing the model against their digital infrastructures.

Which, actually, brings me to the canary in the coal mine I’ll be looking for: How the companies in Glasswing react. If, over the next few months, everyone from Amazon to Chase to Meta starts scrambling to prepare for a new security threat, that will confirm to me that this threat is real. If these guys all play with the model and nothing really changes, I’ll assume that the threat was overhyped.

For now, I think it’s important to remind everyone that we’ve been here a few times already: For two years now, the AI industry has been saying that autonomous agents handling complex work with minimal supervision were about to upend the entire economy. Any day now. Two years after Claude Code was supposed to make software engineers irrelevant, the company is facing criticism that Claude’s abilities are actually degrading, perhaps because it’s hitting compute limits. Even the Mythos hype, less than a week in, is already being questioned — and the fine print in Anthropic’s own paper suggests it “can’t state with certainty” (yet) how serious some of the vulnerabilities Mythos found actually are, so we’re left waiting for more information about what, exactly, the model is actually capable of.

I don’t want to fall victim to the “boy who cried wolf” dynamic here, but again: I’m just urging some skepticism. Every news outlet and influencer is eating this “too dangerous for normal people” stuff up without any outside testing of the model, and we really should wait to see the evidence ourselves. There is a cost to overreacting: stifling innovation, distorting priorities, and contributing to the kind of hysteria that has already led to an attempt on Sam Altman’s life. Maybe I’ll end up with egg on my face, but I’d rather be a little slow to declare a problem than panic before I get all the information.

Most people seem to think that on a linear scale of 1 to 10, we’re at about a “2” in the evolution of AI. I think we may be closer to a 7. And while the abilities of these models continue to impress, that doesn’t mean they’re going to end up being the world-changing (or ending) products the companies themselves are claiming.

Take the survey: How impactful do you think Anthropic’s Mythos will be? Let us know.

Disagree? That's okay. Our opinion is just one of many. Write in and let us know why, and we'll consider publishing your feedback.

Your questions, answered.

Q: If you are collecting suggestions for another topic, any chance you can write about the Pentagon threatening the Pope? It’s not clear from what I read whether that’s fake news or exaggerated news. Thank you!

— Peg (submitted through Subtext)

Tangle: In case you missed it, Peg is referring to a story first published in The Free Press by writer Mattia Ferraresi titled “Why the Vatican and the White House Are on the Outs.” Ferraresi explains the apparently strained relations between the Trump administration and the Vatican as centered on the pope’s objections to U.S. military intervention abroad.

On January 9, Pope Leo XIV gave his first “state of the world” address in which he criticized the use of “diplomacy based on force.” Shortly after this address, Under Secretary of Defense for Policy Elbridge Colby met with Cardinal Christophe Pierre, then the pope’s ambassador to the U.S., at the Pentagon to discuss apparent differences regarding U.S. foreign policy. According to Ferraresi, during the meeting, “one U.S. official went so far as to invoke the Avignon papacy, the period in the 1300s when the French Crown leveraged its military power to dominate the papal authority.”

The invocation of the Avignon papacy struck some as a not-so-thinly veiled threat of military force. For more context: From 1309 to 1377, seven popes took up official residence in Avignon, France, amid instability in Europe and in response to prolonged military and political pressure against the papacy from King Philip IV of France. The Avignon popes were known to make some (though not all) of their decisions to appease the French Crown.

After Ferraresi’s report was published, both U.S. and Vatican officials confirmed that the meeting took place. However, they disputed Ferraresi’s characterization of the conversation.

The Department of Defense called the meeting “substantive, professional and respectful,” adding that some reporting on the meeting was “highly exaggerated and distorted.” U.S. Ambassador to the Holy See Brian Burch also wrote that Cardinal Pierre said no reference had been made to the Avignon papacy during the meeting.

Holy See Press Office director Matteo Bruni said, “The account offered by certain media outlets regarding this meeting does not correspond to the truth in any way.” Vatican officials also told Catholic news outlet The Pillar that “no threats were implied or made by U.S. officials.” However, Vatican officials characterized the meeting as “tense.”

In short, it seems like some details of the Free Press’s report were inaccurate, or — at least — neither side is willing to confirm the claims. However, relations between the U.S. and the Vatican are strained, and that strain increased in the past week after Pope Leo spoke out against the war in Iran, and President Trump issued a social media post criticizing Pope Leo.

Want to have a question answered in the newsletter? You can reply to this email (it goes straight to our inbox) or fill out this form.

Numbers.

- $380 billion. Anthropic’s valuation as of February, following a $30 billion raise in its Series G funding round.

- 99%. The approximate percentage of software vulnerabilities identified by Claude Mythos that have not been patched, according to Anthropic.

- $100 million. The amount that Anthropic says it is committing in Mythos Preview usage credits to support defensive cybersecurity work.

- 80% and 18%. The percentages of U.S. adults who say they are concerned and not concerned, respectively, about AI, according to a March 2026 Quinnipiac University poll.

- 51%. The percentage of U.S. adults who say the pace of AI development is moving faster than they expected.

- 34% and 55%. The percentages of U.S. adults who think AI will do more good than harm and more harm than good, respectively.

Which type of pet insurance do you need?

Accident & illness policies, accident coverage, and wellness riders all offer varying degrees of protection.

Which one do you need?

View Money's list of best pet insurance providers of 2026 to find the best fit for you.

The road not taken.

For Wednesday’s and Thursday’s editions, we contemplated three topics that we ultimately opted not to cover immediately: President Trump’s feud with Pope Leo XIV, the Justice Department’s move to dismiss seditious conspiracy charges for Jan. 6 rioters, and the peace talks between Israel and Lebanon. We briefly addressed the Trump–Pope story today through a reader question, and may cover it as a main story in the future. The Jan. 6 charges dismissal is also a candidate for next week, but we didn’t find sufficient commentary for the right and left by Thursday. We’re also planning to dedicate full coverage to Israel–Lebanon negotiations and the ongoing conflict as the situation progresses.

The extras.

- One year ago today we wrote about the Harvard–Trump standoff.

- The most clicked link in our last regular newsletter was the standoff between Trump, Vance, and Pope Leo XIV.

- Something to do with politics: Isaac, Ari, and Kmele discussed that standoff, Trump’s “Jesus” post, whether there are “demons” among us, and the fractures among MAGA on the latest Suspension of the Rules.

- Nothing to do with politics: Hershey’s announces it will return to its original recipes.

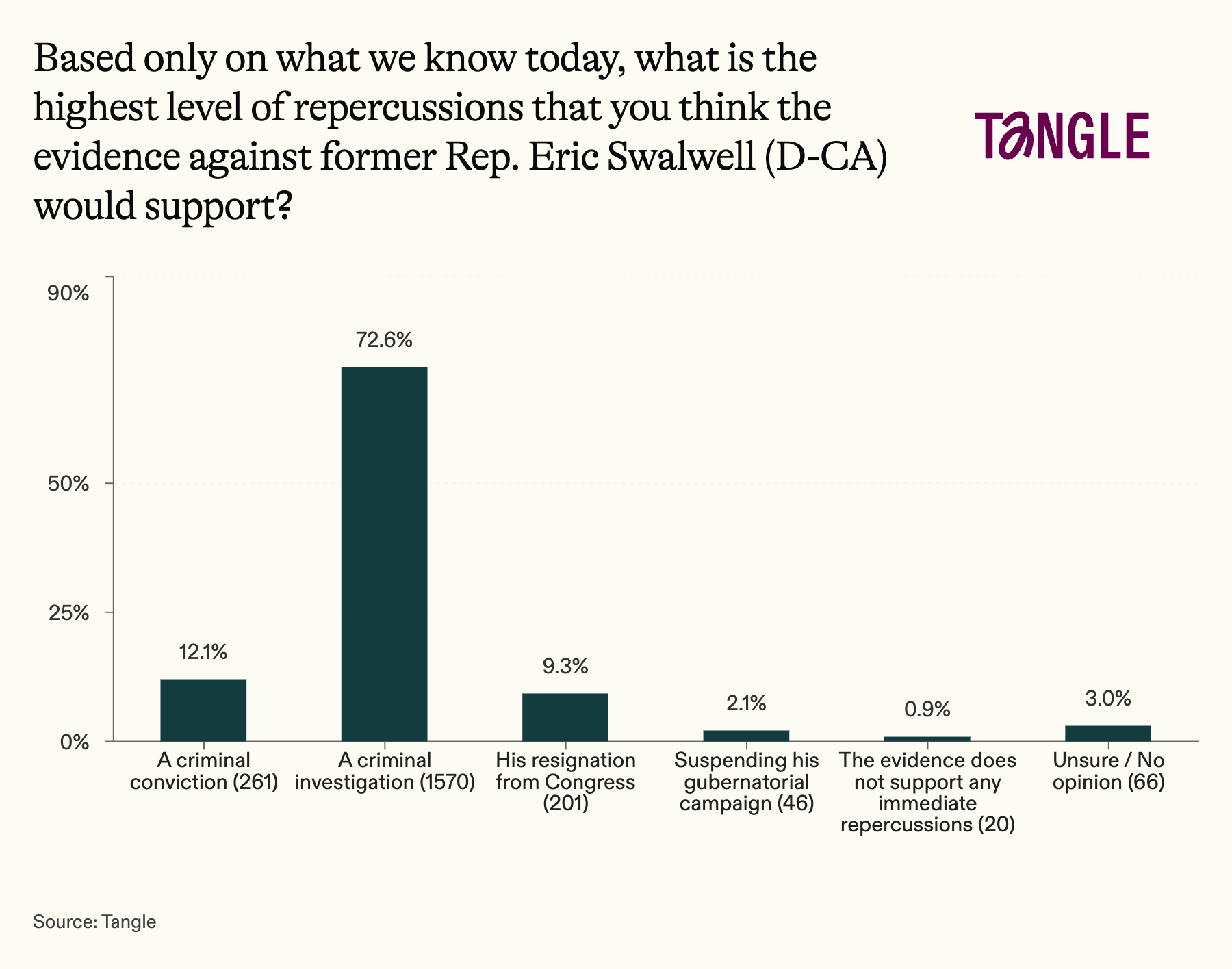

- Our last survey: 2,164 readers responded to our survey on Eric Swalwell, with 73% saying the evidence supports a criminal investigation into the former lawmaker. “He should have been ousted long ago,” one respondent said. “Innocent until proven guilty. But there seems to be good evidence,” said another.

Have a nice day.

When fear strikes, knowing where to find help matters. A group of boys riding their bikes through their Akron, Ohio, neighborhood spotted someone they didn’t know and felt unsafe. Instead of panicking, they rode their bikes to the home of their local pastor, Crystal Varner, and asked her to pray for them. Varner, who leads EQUIP Church with her husband, answered the door and did exactly that. The doorbell footage went viral after being picked up by CBS News, reaching viewers across Germany, Spain, Australia, Nigeria, South Africa, and beyond. “That’s why I love pastor,” one of the boys said. Varner, a former single mother who relied on food stamps, now focuses her ministry on feeding families in need and creating spaces of support. Sunny Skyz has the story.
